Human Intelligence® News Update (3/10)

Humans create. AI imitates. Welcome to your weekly roundup about human creativity in the age of AI.

Human Creativity

BOOKMARK THESE - 10 New Creator-Specific Pages

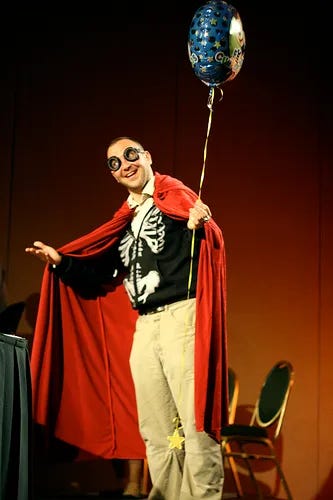

Human Intelligence® continues to grow, a testament to the popularity of our pro-human authentication system and our mission to serve human creators. To showcase the spectrum of human creativity, we’ve launched the following 10 pages: musicians, fiction writers, journalists, photographers, film/video makers, visual artists, crafters, tabletop game creators, influencers, and fans of human creators! Take a look. And if you haven’t yet, consider signing up - just like the 300+ artists, writers, and creators who did so at Emerald City Comic Con last weekend. Oh, and there’s a Spanish version, too. Olé! [sharing is caring]

SHELF STABLE - “Cult of the Old” Podcaster Talks Board Games

Do board games lose their luster after five years? That’s the question Shelf Stable podcast hosts Kenny Katayama and Tom Bowers focus on, examining the longevity of “at least 5-year-old games” to see if the initial hype is sustainable. Katayama, a verified human creator, recently sat down with HI to unpack Shelf Stable’s approach to assessing board game quality, including old-school games, the influx of GenAI in the space, and the challenges of ensuring IP integrity and artist compensation. Watch or listen » [31 min]

THE ENSH*TTIFICATOR - Norway Provides Sh*tty Comic Relief

The term ensh*ttification has shifted from novel and funny to exasperating and brow-furrowing in its wholesale Internet takeover, made even more infuriating in the GenAI age. But not all humor is lost. Forbrukerrådet, the Norwegian Consumer Council, has created a deviously clever public service announcement about this phenomenon (it’s in English). Sometimes government organizations do good things. Who knew? Watch » [4 min]

Human VS Robot

APE CODING - Agentic Coding Critics Reappropriate the Derogation

In this satire-meets-serious blog, Brazilian software engineer Rômulo Saksida dives into ape coding: once a derogatory slang term for developers who can’t use AI agents, it has recently been embraced by humans who deliberately hand-write code. Saksida walks through the rationale, revival, and current trends, all governed by the throughline, “… software engineered by AIs [does] not match the reliability of software engineered by humans ….” Read it » [6 min]

CLEAR & PRESENT DANGER - Report Says U.K. Copyright Framework is Outdated

It’s unlikely this is a surprise to human creators (or frankly, anyone with a pulse). Nonetheless, the good news is that the House of Lords Select Committee on Communications and Digital has woken up and smelled the coffee (errr, tea), releasing a report calling for the U.K. to become a world leader in responsible, licensing- and transparency-based AI development that also protects creators and the economic value they bring. Learn more » [5 min]

PROTECTING HUMAN CREATIVITY - U.S. Supreme Court Saves Artists from GenAI

The high court definitively dismissed a lawsuit by Stephen Thaler claiming that AI-generated works can be copyrighted - a hugely consequential step to protect human creators. In this blog, journalist and author Cory Doctorow (who coined the term and wrote the book called Ensh*ttification, btw) unpacks the story, then adds a useful primer on the bedrock of U.S. copyright law, which states “copyright inheres at the moment of fixation of a work of human creativity.” IOW, copyright is for humans, and humans alone. You might want to bookmark this » [19 min]

Artificial “Intelligence” & Other Myths

FLAWS ARE GOOD - LLM Logic Failures Might Be Good for Society

A new paper by scientists from Stanford, Caltech, and Carleton College looks at reasoning failures of LLMs, concluding that yes, even in the simplest of scenarios, their ability is limited … and yes, this isn’t bad news. In fact, they argue, the most common errors are richly instructive for building systems that make our lives better. (They also say artificial general intelligence, or AGI, isn’t happening any time soon, if ever.) Read the analysis » [7 min]

DYSTOPIAN FUTURE-CASTING - Fictional “What If” Scenario of an AI World

“What if our AI bullishness continues to be right … and what if that’s actually bearish?” Prolific fintech veteran Alap Shah posed this question to James van Geelen of Citrini Research, and a hypothetical economic scenario was born - where aggressive AI build-outs result in cratering software stocks, decimated credit lines, and sky-high unemployment. The authors claim it’s neither bear porn nor AI doomer fan-fiction. Rather, it’s a thought exercise that’s been underexplored. Read the review or the full version. [3 min; 35 min]

PERSONAL ECHO CHAMBER - LLMs Are an Epistemic Nightmare

Adding to the growing pile of keep-your-wits-about-you data points, a new study from Princeton University concludes that anyone who uses a chatbot is at risk of falling prey to LLM sycophancy, the servile flattery most chatbots weave into their responses to our prompts. It’s a feature, not a bug; AI systems are designed to be “helpful” by prioritizing and validating the user’s narrative. Per the study, this can “facilitate delusion-like epistemic states, producing belief markedly divergent from reality.” Learn more » [1 min]

This work has been certified as genuine human work. Check the certificate here.